AI Has an Energy Problem, and Enterprises Need to Pay Attention

By Mark Hewitt

The enterprise AI race is accelerating at extraordinary speed. Organizations are investing aggressively in generative AI, intelligent automation, advanced analytics, and autonomous systems. Boards are demanding AI strategies. Executives are under pressure to demonstrate innovation. Technology teams are scaling infrastructure at unprecedented rates. But underneath the momentum is a growing reality few leaders are openly discussing: AI consumes enormous amounts of energy.

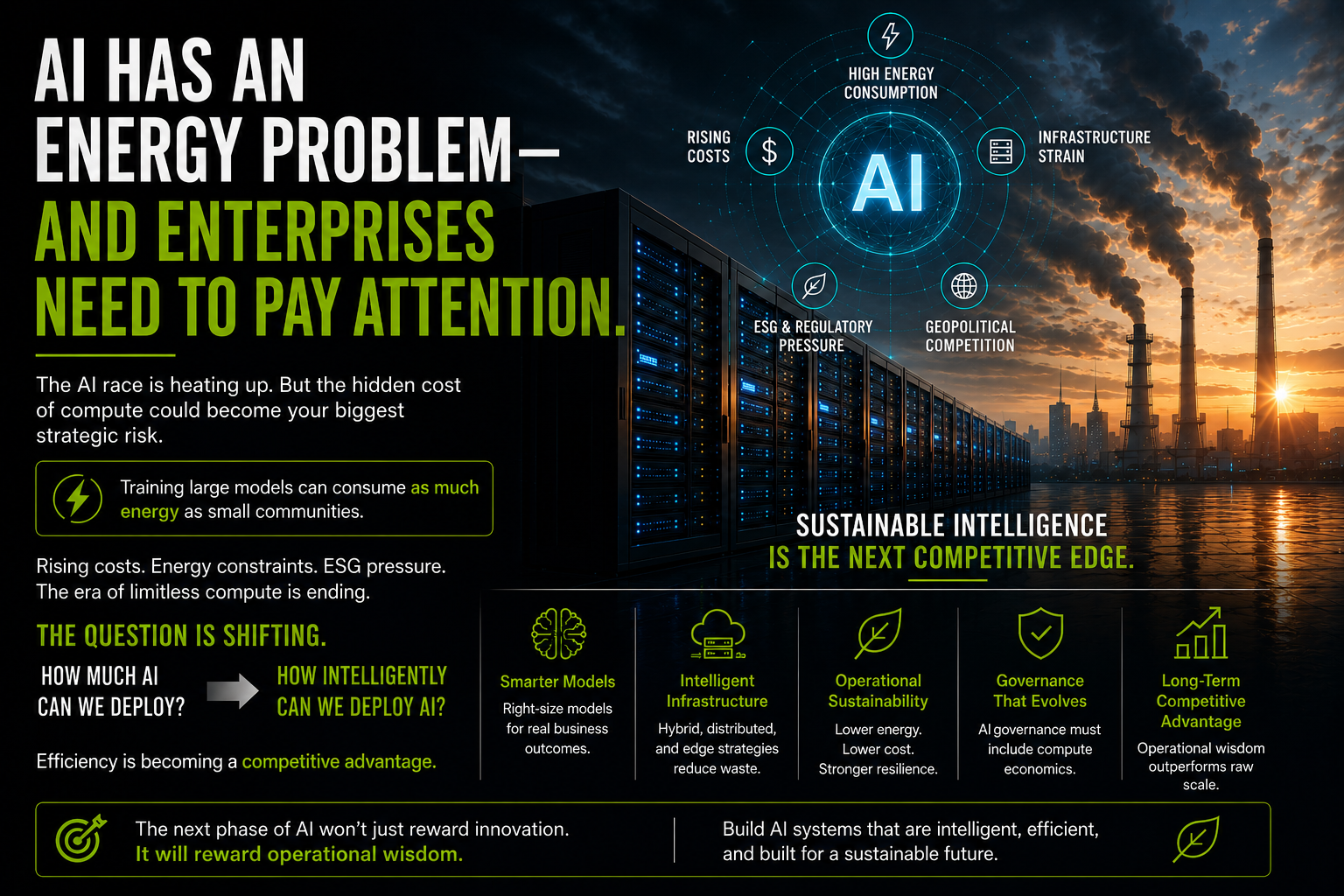

The modern AI economy is built on compute-intensive infrastructure that requires massive electrical power, sophisticated cooling systems, and increasingly expensive hardware. Training a large language model can consume as much electricity as a small community. Inference workloads that run continuously across the enterprise multiply that demand further.

For now, many organizations are absorbing these costs in pursuit of competitive advantage. But eventually, economics matter. The assumption that enterprises can endlessly scale compute without operational consequences is unlikely to hold. Rising infrastructure costs, energy constraints, ESG pressures, and geopolitical competition around power availability are beginning to reshape how organizations think about AI deployment.

This is where the enterprise AI conversation becomes more strategic. Leaders of the next decade may not simply be the companies with the largest models or the biggest infrastructure footprints. They may be the organizations that learn how to operate intelligent systems efficiently, sustainably, and resiliently.

That changes the discussion entirely. Instead of asking, “How much AI can we deploy?” executives may soon ask, “How intelligently can we deploy AI?” This shift is already influencing enterprise architecture decisions. Organizations are exploring smaller specialized models, localized AI inference, hybrid infrastructure strategies, and edge-based computing approaches that reduce both latency and energy consumption. Efficiency is quietly becoming a competitive advantage.
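To make the efficiency argument concrete, here is a minimal back-of-the-envelope sketch in Python. It assumes annual energy is roughly average IT power, multiplied by facility overhead (power usage effectiveness, or PUE), multiplied by the hours in a year. The `Deployment` type, the two example fleets, and every number in them are hypothetical placeholders chosen for illustration, not measurements from any real system.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


@dataclass
class Deployment:
    """A hypothetical always-on inference deployment (illustrative only)."""
    name: str
    accelerators: int             # accelerators kept running around the clock
    watts_per_accelerator: float  # assumed average draw per accelerator, in watts
    pue: float                    # facility power usage effectiveness (>= 1.0)


def annual_energy_kwh(d: Deployment) -> float:
    """Average IT power (kW) x facility overhead (PUE) x hours per year."""
    it_kw = d.accelerators * d.watts_per_accelerator / 1000.0
    return it_kw * d.pue * HOURS_PER_YEAR


def annual_cost(d: Deployment, price_per_kwh: float) -> float:
    """Annual electricity cost at a flat, assumed price per kWh."""
    return annual_energy_kwh(d) * price_per_kwh


# Placeholder inputs: replace with metered figures from your own environment.
large = Deployment("large general-purpose model", 64, 700.0, 1.4)
small = Deployment("small specialized model", 8, 350.0, 1.4)
ASSUMED_PRICE_PER_KWH = 0.10  # hypothetical flat rate

for d in (large, small):
    kwh = annual_energy_kwh(d)
    cost = annual_cost(d, ASSUMED_PRICE_PER_KWH)
    print(f"{d.name}: {kwh:,.0f} kWh/year, about {cost:,.0f} per year")
```

The point of the sketch is the shape of the calculation, not the specific figures: because inference runs continuously, any reduction in average power draw is multiplied by every hour of the year, which is why right-sizing a model can matter more than a one-time training cost.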

The implications extend beyond technology. Energy availability may eventually influence where AI infrastructure is built, which industries can scale fastest, and how governments regulate compute-intensive systems. AI strategy is increasingly becoming infrastructure strategy.

For enterprise leaders, this creates a new responsibility. AI governance can no longer focus solely on ethics, privacy, and model risk. It must also include operational sustainability and compute economics. Organizations that ignore this reality may find themselves building expensive, difficult-to-scale AI ecosystems with unclear long-term economics.

Organizations that adapt early may build something far more valuable: sustainable intelligence. The next phase of AI transformation will not simply reward innovation. It will reward operational wisdom. And that may become the true differentiator of the AI era.