Organizational Design for AI: Teams, Practices, Portfolio Model

By Mark Hewitt

Enterprise AI adoption is accelerating. Most organizations now have a mix of pilots, production use cases, and early experiments with agents. Many have selected platforms, signed vendor contracts, and initiated AI enablement programs.

Yet most enterprises struggle to scale AI beyond pockets of success. The reason is not model capability. The reason is organizational design. AI scale is not a technology problem alone. It is an operating model problem.

AI touches multiple domains simultaneously: data, systems, security, governance, delivery workflows, customer experience, and compliance. If the enterprise does not intentionally design its teams, practices, and portfolio governance, AI becomes fragmented. Progress becomes inconsistent. Risk becomes uneven. Cost rises.

The enterprises that win will not be those with the most tools. They will be those with the clearest AI operating model.

Why AI Scale Creates Organizational Failure Modes

Enterprises experience four predictable failure modes when AI adoption grows without organizational design.

Tool sprawl. Different business units adopt different tools, vendors, copilots, and agent frameworks. Over time, integration becomes difficult and cost rises.

Inconsistent governance. Policies exist, but enforcement varies by team. Some systems are monitored and auditable. Others are not. Risk becomes uneven.

Confused ownership. When AI-enabled workflows fail, no one owns the outcome. Business blames technology. Technology blames data. Compliance blames lack of process. Accountability erodes.

Duplication and waste. Teams build similar capabilities independently. Data pipelines are repeated. Prompt libraries diverge. Monitoring standards vary. The enterprise pays repeatedly for the same work.

These are not technical failures. They are organizational failures. AI needs a deliberate structure that prevents these patterns and enables repeatable delivery with governance.

The Executive Goal: An AI Operating Model

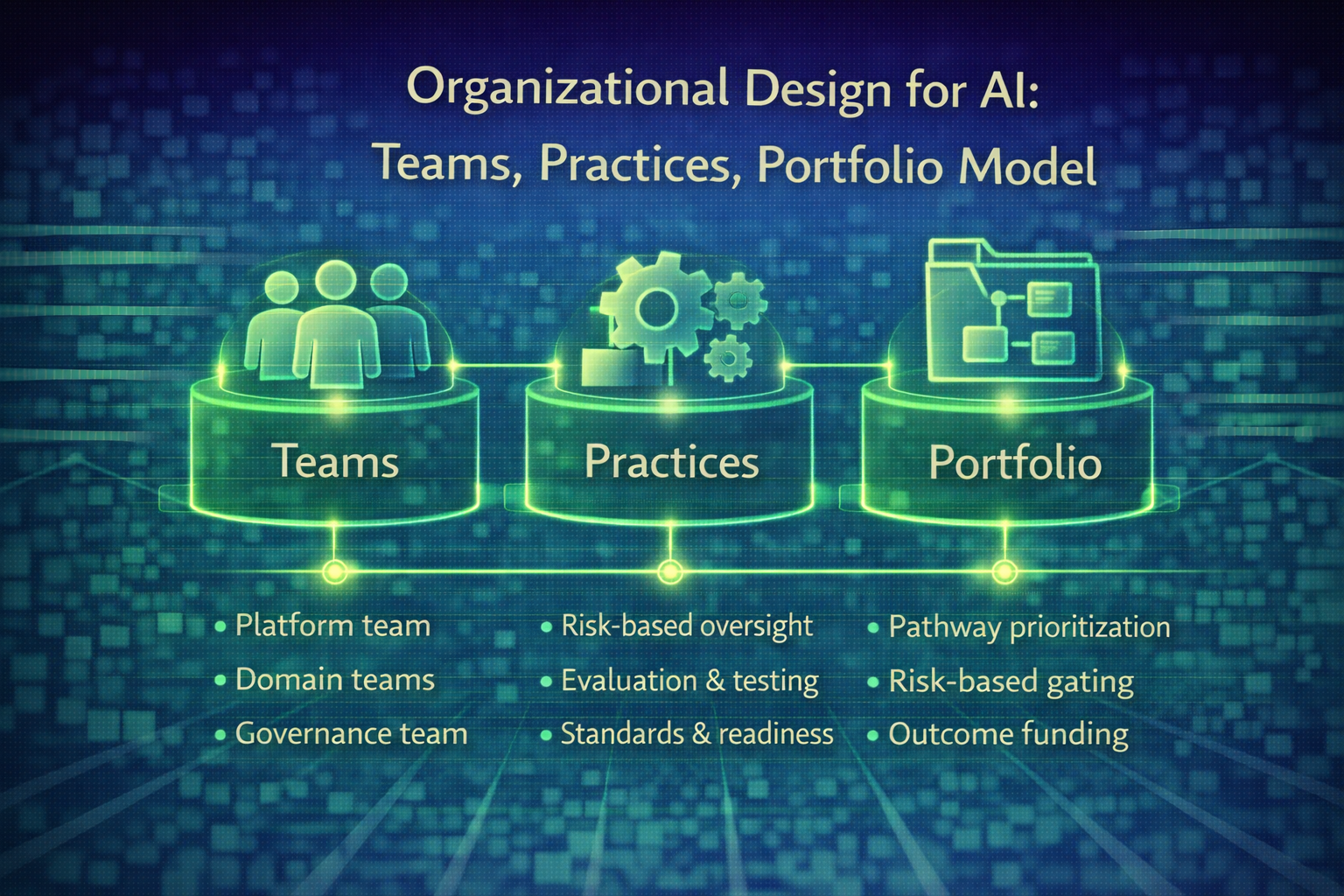

Executives should treat AI as a capability that must be built into the enterprise operating model. This operating model has three parts:

teams and responsibilities

practices and standards

portfolio governance

When these are designed deliberately, scaling becomes manageable. When they are not, scaling becomes chaotic.

Part 1: Teams and Responsibilities

A clear ownership model is the foundation of AI scale. Enterprises need a team structure that balances central enablement with distributed innovation. A practical model includes four key components.

1. An AI Platform and Enablement Team

This team owns shared capabilities:

approved tools, platforms, and patterns

identity and access models for AI and agents

shared libraries and templates

evaluation and testing frameworks

runtime monitoring and evidence capture systems

standards for prompts, retrieval, and agent workflows

developer experience and onboarding

This team reduces duplication and ensures consistency. It should not build every use case. It should make it easier for others to build use cases correctly.

2. Domain Product and Delivery Teams

These teams build AI-enabled workflows in business units, such as:

customer experience workflows

finance and operations workflows

compliance workflows

engineering workflows

sales and revenue workflows

These teams own outcomes and adoption in their domain. They should work within enterprise standards and governance controls. They are the drivers of business value.

3. A Governance and Risk Function

This function ensures enterprise-level oversight, covering:

policy definition

risk tiering and oversight models

compliance requirements

audit readiness expectations

model risk management requirements

escalation pathways

exception handling and approval boards for high-risk workflows

This function must work closely with the platform team and domain teams. Governance that is separated from engineering and operations becomes slow and ineffective.

4. Data and Architecture Stewardship

AI success depends on data trust and system integrity.

Data governance and architecture stewardship must be aligned to AI adoption. This includes:

authoritative sources and data definitions

lineage and metadata standards

quality measurement and drift detection

secure retrieval and classification

dependency mapping for critical pathways

Enterprises that separate AI initiatives from data and architecture realities will struggle to scale.

Part 2: Practices and Standards

AI cannot scale without repeatable practices. Executives should expect the enterprise to standardize around practices that make AI reliable, governable, and secure. Key practices include:

1. Risk-tiered oversight models

Every AI workflow should be assigned to an oversight tier:

human-in-the-loop

human-on-the-loop

human-out-of-the-loop

This ensures consistent governance and avoids ad hoc decisions.
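
As an illustration of how tier assignment could be made rule-based rather than ad hoc, here is a minimal Python sketch. The three tier names come from the list above; the workflow attributes (`takes_irreversible_actions`, `handles_regulated_data`, `affects_customers`), the one-line tier descriptions in the comments, and the rules in `assign_tier` are assumptions chosen for illustration, not a prescribed policy.

```python
from dataclasses import dataclass
from enum import Enum


class OversightTier(Enum):
    # Tier names follow the article; the descriptions are a common reading.
    HUMAN_IN_THE_LOOP = "human-in-the-loop"          # a person approves each action
    HUMAN_ON_THE_LOOP = "human-on-the-loop"          # a person monitors and can intervene
    HUMAN_OUT_OF_THE_LOOP = "human-out-of-the-loop"  # automated, reviewed after the fact


@dataclass(frozen=True)
class WorkflowProfile:
    """Hypothetical risk attributes an enterprise might record per workflow."""
    name: str
    takes_irreversible_actions: bool
    handles_regulated_data: bool
    affects_customers: bool


def assign_tier(profile: WorkflowProfile) -> OversightTier:
    """Map a workflow to an oversight tier using fixed, illustrative rules."""
    if profile.takes_irreversible_actions or profile.handles_regulated_data:
        return OversightTier.HUMAN_IN_THE_LOOP
    if profile.affects_customers:
        return OversightTier.HUMAN_ON_THE_LOOP
    return OversightTier.HUMAN_OUT_OF_THE_LOOP


if __name__ == "__main__":
    refund_agent = WorkflowProfile("refund-agent", True, False, True)
    print(refund_agent.name, "->", assign_tier(refund_agent).value)
```

Encoding the rules in one function means every team gets the same answer for the same risk profile, which is the consistency this practice is meant to provide.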

2. Standardized evaluation and testing

AI needs testing that reflects real workflows. Standards should include:

regression testing for prompt and model updates

evaluation sets tied to domain use cases

adversarial testing for safety and injection

bias and fairness evaluation where relevant

performance and reliability testing under load
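
To make the first two items concrete, the following Python sketch shows one possible shape for a regression gate on prompt or model updates. Everything here is hypothetical: the `EvalCase` structure, the substring check, the stub models, and the `max_drop` tolerance are simplifying assumptions, and a real evaluation would use richer scoring than string matching.

```python
from typing import Callable, List, NamedTuple


class EvalCase(NamedTuple):
    """One item in a domain evaluation set (hypothetical structure)."""
    prompt: str
    must_contain: str  # simplistic pass criterion, for illustration only


def pass_rate(model: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Fraction of cases whose output contains the expected text."""
    if not cases:
        raise ValueError("evaluation set is empty")
    passed = sum(
        1 for case in cases
        if case.must_contain.lower() in model(case.prompt).lower()
    )
    return passed / len(cases)


def regression_gate(
    baseline: Callable[[str], str],
    candidate: Callable[[str], str],
    cases: List[EvalCase],
    max_drop: float = 0.0,
) -> bool:
    """Allow the update only if the candidate does not regress beyond max_drop."""
    return pass_rate(candidate, cases) >= pass_rate(baseline, cases) - max_drop


# Stub models standing in for real prompt/model versions.
def baseline_model(prompt: str) -> str:
    return "Refunds are processed within 5 business days."


def candidate_model(prompt: str) -> str:
    return "Please contact support."


CASES = [EvalCase("How long do refunds take?", "5 business days")]

if __name__ == "__main__":
    print("candidate may ship:", regression_gate(baseline_model, candidate_model, CASES))
```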

3. Standardized observability and evidence capture

Executives should require every AI system to produce:

traceability of decisions and actions

data sources and retrieval context

tool usage logs for agent workflows

confidence thresholds and escalation events

automatically generated audit evidence

This makes governance scalable and reduces manual compliance overhead.
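
One way to picture automatic evidence capture is a structured record emitted for every decision. The Python sketch below is an assumption-laden illustration: the field names, the `record_decision` helper, and the 0.8 escalation threshold are hypothetical and would be set by the enterprise's own standards.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import List


@dataclass
class EvidenceRecord:
    """Hypothetical audit record covering the items listed above."""
    workflow: str
    decision: str
    data_sources: List[str]   # sources and retrieval context used
    tool_calls: List[str]     # tools invoked by the agent
    confidence: float
    escalated: bool           # True when confidence fell below the threshold
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def record_decision(
    workflow: str,
    decision: str,
    data_sources: List[str],
    tool_calls: List[str],
    confidence: float,
    escalation_threshold: float = 0.8,  # illustrative value, not a recommendation
) -> str:
    """Build an evidence record and serialize it as a JSON line."""
    record = EvidenceRecord(
        workflow=workflow,
        decision=decision,
        data_sources=list(data_sources),
        tool_calls=list(tool_calls),
        confidence=confidence,
        escalated=confidence < escalation_threshold,
    )
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    print(record_decision(
        "invoice-matching", "approve", ["erp.invoices"], ["lookup_vendor"], 0.62
    ))
```

Because the record is produced as a side effect of making the decision, audit evidence accumulates without a separate manual step.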

4. Prompt, retrieval, and agent design standards

Without standards, every team builds differently. Risk increases and reuse declines. Standards should include:

prompt libraries and version control

retrieval sourcing and classification rules

tool allowlists and permission boundaries

action constraints and prohibited operations

kill-switch and rollback mechanisms
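
The last three items can be enforced in code rather than left to convention. The sketch below, in Python, wraps every agent tool call in a guard that checks an allowlist, a set of prohibited operations, and a kill switch. The `ToolGuard` class, its method names, and the example tools are hypothetical and not tied to any particular agent framework.

```python
from typing import Any, Callable, Iterable


class ToolNotAllowed(Exception):
    """Raised when a tool or operation is outside the permitted boundary."""


class AgentHalted(Exception):
    """Raised when the kill switch has been triggered."""


class ToolGuard:
    """Illustrative permission boundary for agent tool calls."""

    def __init__(self, allowlist: Iterable[str], prohibited_operations: Iterable[str]):
        self._allowlist = frozenset(allowlist)
        self._prohibited = frozenset(prohibited_operations)
        self._halted = False

    def kill(self) -> None:
        """Kill switch: block all further tool calls."""
        self._halted = True

    def call(self, tool: str, operation: str, fn: Callable[..., Any], *args: Any) -> Any:
        """Run fn only if the tool and operation pass every check."""
        if self._halted:
            raise AgentHalted("kill switch is active")
        if tool not in self._allowlist:
            raise ToolNotAllowed(f"tool not on allowlist: {tool}")
        if operation in self._prohibited:
            raise ToolNotAllowed(f"prohibited operation: {operation}")
        return fn(*args)


if __name__ == "__main__":
    guard = ToolGuard(allowlist={"crm"}, prohibited_operations={"delete"})
    print(guard.call("crm", "read", lambda customer_id: {"id": customer_id}, 42))
```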

5. Operational readiness and incident response

AI systems must have operational ownership and incident response playbooks. AI failures are production failures. They require:

monitoring

escalation thresholds

rollback capability

post-incident remediation processes

AI cannot scale without operational discipline.

Part 3: Portfolio Model

AI scale requires enterprise prioritization. Many enterprises allow AI initiatives to spread through enthusiasm. Teams build what they want. Pilots grow without strategic sequencing. Resources spread thin. Results become inconsistent. Executives should instead create an AI portfolio model, similar to an investment portfolio. A practical portfolio model includes:

1. Pathway-based prioritization

Prioritize AI use cases by enterprise pathways:

revenue and customer pathways

operational continuity pathways

compliance and risk pathways

workforce productivity pathways

This ensures AI investment aligns to critical enterprise outcomes.

2. Risk-based gating

High-risk AI workflows require stronger governance and slower expansion. Low-risk workflows can scale more quickly. Risk-based gating prevents uncontrolled autonomy.
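
A gating rule of this kind can be expressed as a simple check on each expansion request. In the Python sketch below, the risk levels, rollout caps, approval counts, and the `ExpansionRequest` fields are all illustrative assumptions; the point is only that higher risk maps to tighter limits.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical policy values: maximum share of eligible traffic a workflow
# may serve, and sign-offs required, per risk level.
ROLLOUT_CAPS = {"low": 1.0, "medium": 0.5, "high": 0.1}
REQUIRED_APPROVALS = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class ExpansionRequest:
    workflow: str
    risk: str               # "low", "medium", or "high"
    requested_share: float  # 0.0 to 1.0
    approvals: int
    open_incidents: int


def gate(request: ExpansionRequest) -> Tuple[bool, str]:
    """Return (allowed, reason) for a request to expand a workflow."""
    if request.risk not in ROLLOUT_CAPS:
        return False, f"unknown risk level: {request.risk}"
    if request.open_incidents > 0:
        return False, "open incidents must be resolved first"
    if request.approvals < REQUIRED_APPROVALS[request.risk]:
        return False, "insufficient approvals"
    if request.requested_share > ROLLOUT_CAPS[request.risk]:
        return False, "requested share exceeds cap for this risk level"
    return True, "approved"


if __name__ == "__main__":
    print(gate(ExpansionRequest("claims-agent", "high", 0.5, 2, 0)))
```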

3. Standard adoption patterns

Use cases should be delivered through repeatable patterns and templates. This reduces cost and increases reliability.

4. Outcome-based funding

Fund AI initiatives based on measurable outcomes, not experimentation volume. Tie funding to operational, financial, and talent metrics.

5. Executive scorecard reporting

Executives should review:

value delivered by workflow category

governance coverage and risk tier distribution

drift and reliability indicators

adoption trends

operational impact and cost savings

talent impact and toil reduction

This turns AI adoption into an executive-managed capability rather than an uncontrolled trend.
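
Several of these scorecard items can be computed directly from a workflow inventory. The Python sketch below assumes a hypothetical `WorkflowStatus` record and derives value by category, risk tier distribution, and governance coverage; the field names and the definition of coverage (share of workflows that are monitored) are assumptions for illustration.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class WorkflowStatus:
    """Hypothetical inventory entry for one AI workflow."""
    name: str
    category: str
    risk_tier: str
    monitored: bool
    annual_value: float


def scorecard(workflows: List[WorkflowStatus]) -> Dict[str, object]:
    """Aggregate an inventory into a few executive scorecard figures."""
    if not workflows:
        raise ValueError("no workflows to report on")
    value_by_category: Dict[str, float] = defaultdict(float)
    for workflow in workflows:
        value_by_category[workflow.category] += workflow.annual_value
    return {
        "value_by_category": dict(value_by_category),
        "risk_tier_distribution": dict(Counter(w.risk_tier for w in workflows)),
        # Coverage here means the share of workflows with monitoring in place.
        "governance_coverage": sum(w.monitored for w in workflows) / len(workflows),
    }


if __name__ == "__main__":
    inventory = [
        WorkflowStatus("faq-bot", "customer", "low", True, 120000.0),
        WorkflowStatus("claims-agent", "operations", "high", True, 300000.0),
        WorkflowStatus("lead-scoring", "customer", "medium", False, 80000.0),
    ]
    print(scorecard(inventory))
```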

The Executive Principle: Centralize Standards, Decentralize Value

The most successful AI operating models follow one principle: centralize standards and governance, and decentralize value creation. Central teams define controls, patterns, and platform capabilities. Domain teams deliver outcomes using those standards. This structure prevents fragmentation while enabling innovation.

Takeaways

AI will not scale through tools alone. It scales through organizational design. Enterprises that treat AI adoption as a technology rollout will experience tool sprawl, inconsistent governance, unclear ownership, and duplicated effort. Enterprises that design teams, practices, and portfolio governance deliberately will scale AI with control, trust, and measurable outcomes.

Organizational design is the differentiator. The question is not whether you have adopted AI. The question is whether your organization is designed to scale it responsibly.